January 14, 2024

Author: Tathagata Das

Editor: Manish Verma

Curiosity is the desire to learn about one’s environment and a keen interest in understanding what happens in it. Childhood, from infancy through the early school years, is considered the most curious phase of human life. Curiosity is present in a human from birth, but where does it start? What is its origin?

Hearing, or audition, is one of the earliest senses to become functional in a human fetus. A fetus starts to hear the heartbeat and blood flow in the mother’s body as early as 18 weeks and can start responding to external sounds after 22 weeks of pregnancy. This is the start of learning through audition and some of the earliest evidence of curiosity. The curious mind has started forming pathways for processing sounds and building a base for understanding its mother’s language.

A recent study by Mariani et al. (2023) examined how strongly prenatal audition affects the grasp of the mother’s language. Sounds that reach the inside of the uterus are attenuated, but the rhythm and melody of speech remain discernible. The sound that passes through the uterine walls therefore accustoms the fetus to a specific rhythm and melody. This sound is predominantly the mother’s voice, which is why newborns prefer their mother’s voice over other female voices. The newborn even shows a preference for the language the mother spoke during pregnancy. All these preferences are formed before birth and are then shaped into more concrete attributes after birth. The study aimed to understand the neural basis of these phenomena: whether speech stimulation in the uterus changes how a newborn perceives the sounds around it after birth, and whether these modulations are specific enough to let the newborn distinguish between languages.

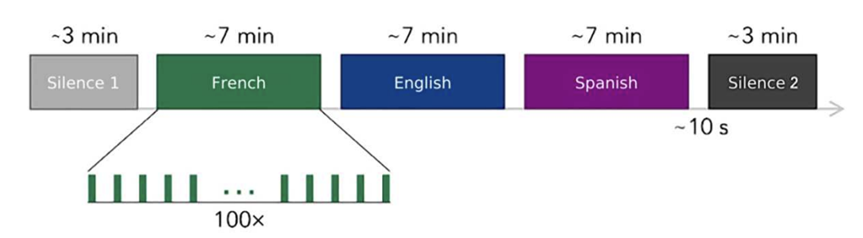

The first part of the study was conducted with 49 French infants aged 1-5 days. Their neural activity was tracked using electroencephalography (EEG) at 10 sites: 5 frontal, 2 temporal and 3 central. The resting-state activity of the brain was first recorded for 3 minutes (silence 1). Then, lines from the children’s story “Goldilocks and the Three Bears” were played as a speech stimulus in the three chosen languages, one after the other in a pseudo-random order, for 7 minutes each. The session concluded with another 3-minute resting-state recording (silence 2). The chosen languages were French (the mother tongue), English (rhythmically different from French) and Spanish (rhythmically similar to French). Comparing the two resting states addresses two questions: first, whether exposure to speech leaves lasting changes in the neural pathways that support learning and memory; second, whether these lasting changes occur for all languages equally or are specific to the mother tongue.

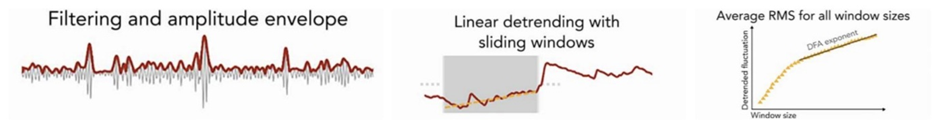

The second part of the study consists of the analysis of the recorded data. The EEG data were analysed in accordance with the neural oscillation model and a corresponding prosodic hierarchy theory. Earlier studies by other researchers show that the Delta band (1-3 Hz) underlies the processing of large prosodic units (full utterances and phrases), the Theta band (4-8 Hz) underlies the processing of small prosodic units (syllables) and the Gamma band (>35 Hz) underlies the processing of phonemes (e.g., distinct consonant sounds). Since the speech stimuli in the uterus consist mostly of low-frequency information, i.e., prosody, the Delta and Theta bands were expected to change the most. To assess this change, two different analysis methods were used: i) Detrended Fluctuation Analysis and ii) autocorrelation.

Detrended Fluctuation Analysis (DFA) is a method applied to time series to measure their statistical self-similarity. It quantifies this self-similarity through Long-Range Temporal Correlations (LRTCs), whose strength is given by the scaling exponent α. The exponent α indicates whether a given state is influenced by the “memory” of previous states retained by the process generating it. The value of α broadly gives three possibilities: if α < 0.5, the states are anti-correlated, so previous states tend not to recur; if α = 0.5, previous and new states are uncorrelated; and if 0.5 < α < 1, the states are positively correlated and the system is more likely to return to a previous state. A human brain EEG shows α ~ 0.72, which indicates that it is produced by a self-affine process.
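To make the scaling exponent α more concrete, here is a minimal sketch of the standard DFA procedure in plain Python. It is not the pipeline used in the study: the function names, the window sizes and the synthetic test signals are all assumptions chosen for illustration, and real EEG would first need filtering and artefact removal. The steps are: integrate the mean-centred signal, remove a linear trend from each window, measure the leftover fluctuation, and read α off the slope of the log-log relationship between fluctuation and window size.

```python
import math
import random


def linear_fit(xs, ys):
    """Least-squares slope and intercept of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def dfa_exponent(signal, window_sizes):
    """Estimate the DFA scaling exponent alpha of a 1-D signal."""
    mean = sum(signal) / len(signal)
    # Step 1: integrate the mean-centred signal to obtain the "profile".
    profile = []
    total = 0.0
    for value in signal:
        total += value - mean
        profile.append(total)

    log_sizes, log_flucts = [], []
    for size in window_sizes:
        n_windows = len(profile) // size
        if n_windows < 2:
            continue  # too few windows for a stable estimate
        xs = list(range(size))
        squared_residuals = 0.0
        # Step 2: remove a linear trend from each non-overlapping window.
        for w in range(n_windows):
            segment = profile[w * size:(w + 1) * size]
            slope, intercept = linear_fit(xs, segment)
            squared_residuals += sum(
                (y - (slope * x + intercept)) ** 2 for x, y in zip(xs, segment)
            )
        # Step 3: root-mean-square fluctuation at this window size.
        fluctuation = math.sqrt(squared_residuals / (n_windows * size))
        log_sizes.append(math.log(size))
        log_flucts.append(math.log(fluctuation))

    # Step 4: alpha is the slope of log F(n) against log n.
    alpha, _ = linear_fit(log_sizes, log_flucts)
    return alpha


# Synthetic signals (assumptions, not EEG): uncorrelated noise and its running sum.
rng = random.Random(0)
white_noise = [rng.gauss(0.0, 1.0) for _ in range(4096)]
random_walk = []
position = 0.0
for step in white_noise:
    position += step
    random_walk.append(position)

sizes = [16, 32, 64, 128, 256, 512]  # illustrative window sizes in samples
alpha_noise = dfa_exponent(white_noise, sizes)
alpha_walk = dfa_exponent(random_walk, sizes)
print(f"white noise alpha: {alpha_noise:.2f}")
print(f"random walk alpha: {alpha_walk:.2f}")
```

Uncorrelated noise should give an exponent close to 0.5, while its running sum, which carries strong memory of earlier values, should give a much larger one. Exponents between 0.5 and 1, like those reported for EEG, sit between these two extremes.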

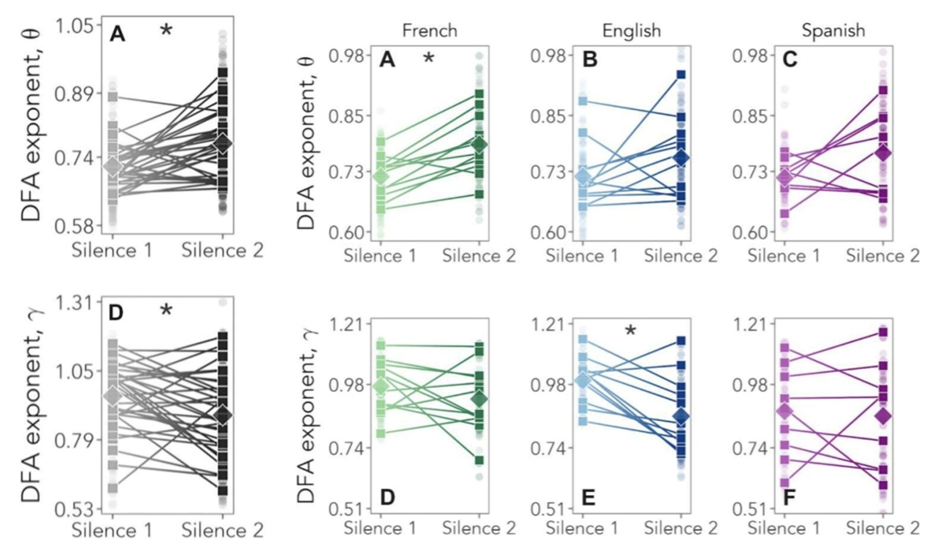

To implement the DFA, the recorded EEGs were processed and the Theta and Gamma oscillations were extracted from them. After DFA was applied to the signals, the average exponent in the Theta band increased significantly, from α = 0.76 in silence 1 to α = 0.81 in silence 2. The opposite was observed in the Gamma band, where α decreased from 0.95 to 0.88. These values indicate that exposure to speech alters the LRTCs and shapes the brain. The LRTCs increased specifically in the Theta band, pointing to increased learning of small prosodic units, i.e., syllables, which are part of the low-frequency sound stimuli heard in the uterus.

To understand the role of this prenatal experience in language processing, the DFA exponents were grouped by the language the infant heard last, and the same calculations were repeated separately for each group. In the Theta band, the exponent increased significantly for infants who heard French last, from α = 0.76 to α = 0.83, while infants whose stimulation ended with Spanish or English showed non-significant changes. In the Gamma band, infants who heard French or Spanish last showed no significant change, while those who heard English last showed a significant decline.

The second approach, autocorrelation, was also applied: the autocorrelation function of the signal’s fluctuations was estimated to characterise its temporal correlations. The distribution of the relative change in autocorrelation time showed positive skewness and kurtosis. This suggests that language stimulation leads to higher temporal correlations and more sustained activity.
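The quantities named above can be illustrated with a small sketch in plain Python. Everything here is an assumption made for illustration: the autocorrelation time is defined as the first lag at which the autocorrelation falls below 1/e, the kurtosis is computed as excess kurtosis, and the "recordings" are simulated AR(1) signals whose memory parameter is set by hand, not EEG data. The article does not specify how the study defined or computed these quantities.

```python
import math
import random


def autocorrelation(signal, max_lag):
    """Normalised sample autocorrelation for lags 0..max_lag."""
    n = len(signal)
    mean = sum(signal) / n
    centred = [value - mean for value in signal]
    variance = sum(c * c for c in centred)
    return [
        sum(centred[i] * centred[i + lag] for i in range(n - lag)) / variance
        for lag in range(max_lag + 1)
    ]


def autocorrelation_time(signal, max_lag=60):
    """First lag where the autocorrelation drops below 1/e (assumed definition)."""
    for lag, value in enumerate(autocorrelation(signal, max_lag)):
        if value < 1.0 / math.e:
            return lag
    return max_lag


def skewness_and_excess_kurtosis(values):
    """Moment-based sample skewness and excess kurtosis."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    if m2 == 0:
        return 0.0, 0.0  # degenerate case: all values identical
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def ar1(phi, n, rng):
    """Toy AR(1) signal x[t] = phi * x[t-1] + noise; larger phi means longer memory."""
    x, samples = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        samples.append(x)
    return samples


rng = random.Random(1)
relative_changes = []
for _ in range(10):  # ten simulated recordings, not real infants
    tau_before = autocorrelation_time(ar1(0.80, 1000, rng))  # stand-in for silence 1
    tau_after = autocorrelation_time(ar1(0.90, 1000, rng))   # stand-in for silence 2
    relative_changes.append((tau_after - tau_before) / tau_before)

skew, excess_kurt = skewness_and_excess_kurtosis(relative_changes)
print("relative changes:", [round(c, 2) for c in relative_changes])
print(f"skewness: {skew:.2f}, excess kurtosis: {excess_kurt:.2f}")
```

A longer autocorrelation time means the signal stays similar to its own past for longer. The skewness and kurtosis then summarise how the relative changes are distributed across recordings; the values printed for this toy data say nothing about the study's results.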

These results give strong evidence that infants already learn some aspects of language before birth and that the mechanisms of language processing are at work even before the infant is born. From a neuroscientific perspective, this shows that infants are, at birth, already in an optimal state to understand and process speech and language, which helps explain why newborns are so good at understanding language from birth.

References

- Mariani, B., Nicoletti, G., Barzon, G., Ortiz Barajas, M. C., Shukla, M., Guevara, R., Suweis, S. S., & Gervain, J. (2023). Prenatal experience with language shapes the brain. Science Advances, 9(47), eadj3524. https://doi.org/10.1126/sciadv.adj3524

- Busnel, M. C., Granier-Deferre, C., & Lecanuet, J. P. (1992). Fetal audition. Annals of the New York Academy of Sciences, 662, 118–134. https://doi.org/10.1111/j.1749-6632.1992.tb22857.x

- Moon, C., Lagercrantz, H., & Kuhl, P. K. (2013). Language experienced in utero affects vowel perception after birth: a two-country study. Acta Paediatrica, 102(2), 156–160. https://doi.org/10.1111/apa.12098

- Bahrick, L. E. (1988). Intermodal learning in infancy: learning on the basis of two kinds of invariant relations in audible and visible events. Child Development, 59(1), 197–209.